Deepfakes are a rising concern in cybersecurity. They produce hyper-realistic audio and video that can trick people, and even machines, into accepting fabricated content as real and authentic, which enables scams and fraud such as impersonation and the spread of misinformation.

By smoothly fusing fact and fiction, malicious actors who use deepfakes endanger people, institutions, and communities. One of the most recent cases comes from Hong Kong, where a finance worker paid out $25 million after a video call with a deepfake ‘chief financial officer’.

What is a deepfake and how is it used?

“Deepfake” is a combination of “deep learning” and “fake”. It refers to the use of artificial intelligence to create realistic but deceptive video, images, or audio. One approach generates them with an autoencoder, a special kind of neural network that is trained to reproduce its input at its output.

The most common AI tool for generating deepfakes is the GAN (generative adversarial network). A GAN sets two neural networks in direct competition with one another: a generator and a discriminator. The generator produces a new image based on what it has learned from its training data. The discriminator determines whether an image is real or fake. Both components stay in constant interaction. The generator learns to create images that deceive the discriminator into classifying them as real. The discriminator, on the other hand, learns not to be deceived. The better the discriminator is, the harder the generator must work to fool it and, ultimately, the more realistic the generator’s images become.
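The tug-of-war between the two networks can be made concrete with their loss functions. The following is a minimal sketch, not a full GAN: it assumes the discriminator outputs a single probability that an image is real, and it uses the common "non-saturating" form of the generator loss. The function names are illustrative and do not come from any particular library.

```python
import math


def bce(prediction: float, target: float) -> float:
    """Binary cross-entropy for one prediction in (0, 1)."""
    eps = 1e-12  # clamp to avoid log(0)
    p = min(max(prediction, eps), 1.0 - eps)
    return -(target * math.log(p) + (1.0 - target) * math.log(1.0 - p))


def discriminator_loss(d_real: float, d_fake: float) -> float:
    """The discriminator wants D(real) close to 1 and D(fake) close to 0."""
    return bce(d_real, 1.0) + bce(d_fake, 0.0)


def generator_loss(d_fake: float) -> float:
    """The generator wants the discriminator to output 1 on its fakes."""
    return bce(d_fake, 1.0)


if __name__ == "__main__":
    # Discriminator is winning: it rates the fake as only 10% likely real.
    print(discriminator_loss(0.9, 0.1), generator_loss(0.1))
    # Generator is winning: the fake is rated 90% likely real.
    print(discriminator_loss(0.9, 0.9), generator_loss(0.9))
```

When the discriminator rates a fake as probably real, the generator's loss drops and the discriminator's loss rises, so each network's progress becomes the other's training signal.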

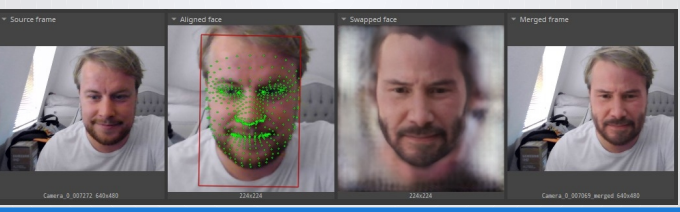

Here is an example of a deepfake photo:

Uses of deepfakes:

Some good applications:

Entertainment: Deepfakes can be used to overlay faces onto performers or to reconstruct characters.

Research: Realistic training images, like MRI pictures, can be produced using deepfakes.

Education: By combining video scripts with avatar-based videos, deepfakes can be utilized to provide learning materials.

Brand customization: Deepfakes can be used to modify attributes such as skin tone and ethnicity to better represent a range of consumer demographics.

Political satire: Political satire can be produced with deepfakes.

Harmful uses of deepfakes:

Disinformation: Deepfakes can be used to spread propaganda or inaccurate information.

Fraud: By using deepfakes to pose as someone else, attackers can obtain personally identifiable information.

Exploitation: The capacity to produce realistic fake photographs and videos poses a threat to people’s reputations. Deepfake AI makes it easier to create non-consensual pornographic content, which can result in privacy violations, humiliation, and personal harm. Malicious use of deepfakes for character assassination or revenge porn raises significant moral and legal issues.

Sexual harassment of women: Deepfakes can be used to produce sexually harassing content.

Social engineering and fraud: Deepfake AI can help carry out complex social engineering and fraud schemes. Cybercriminals can trick victims into disclosing private information or completing financial transactions by imitating the voices and looks of authoritative individuals. These kinds of incidents damage security, undermine trust, and result in monetary losses.

Deepfake threats in Cybersecurity:

Social Engineering Attacks:

Impersonation: To trick victims into disclosing private information or sending money, cybercriminals can utilize deepfakes to pose as CEOs, staff members, or other reliable individuals.

Phishing: Phishing techniques can use deepfake audio or video messages to make fraudulent interactions seem real.

Disinformation Campaigns: Deepfakes can be used to convey misleading information in an effort to sway public opinion, undermine institutions, or interfere with political processes. A decline in confidence in the media and information sources may result from this.

Reputation Damage: Malicious actors may produce deepfake content to harm the reputations of people or organizations. For instance, a fake video purporting to show a CEO making provocative remarks might cause serious financial loss and damage to the CEO’s reputation.

Extortion and Blackmail: Cybercriminals can create compromising deepfake content and use it for extortion and blackmail.

The Hong Kong Incident:

Hong Kong authorities announced a case in early February 2024 in which a multimillion-dollar fraud was carried out using deepfake technology. Using deepfakes to pose as the chief financial officer and other important employees of a multinational company, the scammers set up a video conference call and persuaded a finance employee to send almost $25 million.

Initially, the finance clerk was unsure about making the transactions, but after attending the video meeting with what appeared to be his superiors, he was convinced that he was making the right call and transferred the money as the “fake boss” instructed. The fraud was discovered when the employee later checked with the corporation’s head office.

This unfortunate incident could have been avoided by verifying identities independently. Even if a video call appears legitimate, it’s crucial to confirm the identities of participants through separate communication channels. For instance, contacting the supposed caller via a known phone number or official email can help verify authenticity.

Police investigations revealed that the deepfakes likely relied on publicly available company photos, videos and audio to digitally recreate the likenesses and voices of executives.

How can we tackle deepfakes?

1. Use better AI detection technology: With better detection tools, we can spot fake media without even comparing it with the real one, and stop it from spreading and being misused without much hassle. Advanced detection can identify facial or vocal inconsistencies, evidence of the deepfake generation process, or color abnormalities. Using multiple detection and authentication methods together further helps to fight and reduce deepfake attacks.

2. Stay alert and spread awareness: Organizations should train their employees, especially those who are likely targets of deepfakes, so that they are aware of these attacks and have an incident response plan ready for deepfake incidents. There should be regular awareness programs and training for individuals in the organization.

Employees should also be trained to identify and act on phishing emails and other suspicious activity.

3. Legal and regulatory compliance: Deepfake crimes can be reduced by making and enforcing strict laws that penalize the criminals. Although several countries already have laws against deepfakes, they need to be more stringent.

Governments should criminalize the creation and distribution of malicious deepfakes and treat them as serious crimes. It should also be easy for victims to report these crimes and seek justice.

Last but not least, authorities from around the world should come together to deal with cross-border deepfake crimes, since these crimes can be committed from anywhere in the world.

Lawmakers should learn from cases like the Hong Kong incident to ensure that laws address real-world scenarios and challenges.
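To illustrate the first point above, using several detection methods together, here is a rough sketch of how the outputs of independent detectors might be combined into one decision. Everything in it is an assumption made for illustration: the detector names, the scores, the weights, and the thresholds are hypothetical and would need to be calibrated against real tools.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class DetectorResult:
    name: str                # hypothetical detector, e.g. "face" or "voice"
    fake_probability: float  # 0.0 = looks real, 1.0 = looks fake
    weight: float = 1.0      # how much this detector is trusted


def combined_fake_score(results: List[DetectorResult]) -> float:
    """Weighted average of the detectors' fake probabilities."""
    total_weight = sum(r.weight for r in results)
    if total_weight <= 0:
        raise ValueError("at least one detector with positive weight is required")
    return sum(r.fake_probability * r.weight for r in results) / total_weight


def should_escalate(results: List[DetectorResult],
                    threshold: float = 0.5,
                    single_detector_alarm: float = 0.9) -> bool:
    """Escalate if one detector is very sure, or if the average is high."""
    if any(r.fake_probability >= single_detector_alarm for r in results):
        return True
    return combined_fake_score(results) >= threshold


if __name__ == "__main__":
    call = [
        DetectorResult("face", 0.2),
        DetectorResult("voice", 0.95),
        DetectorResult("color", 0.3),
    ]
    print(should_escalate(call))  # the voice detector alone triggers escalation
```

The single-detector alarm is there so that one strong signal, such as a clearly synthetic voice, is not averaged away by detectors that saw nothing unusual.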

What can we do as individuals?

1. Use MFA (multi-factor authentication) along with strong, unique passwords.

2. Stay up to date on technological threats and current cases so that we can react quickly if we ever encounter something similar.

3. Always verify sources and never trust anything blindly. If we receive something that looks suspicious, we should check where it came from.

4. Keep our detection tools updated.

5. Immediately report any deepfakes we come across.
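For readers curious about what MFA looks like under the hood, the sketch below shows one widely used second factor: time-based one-time passwords (TOTP, RFC 6238), built on HOTP (RFC 4226). It uses only the Python standard library. The `verify_totp` helper and its `window` parameter are illustrative choices rather than part of any standard API, and a real deployment should rely on a maintained library and protect the shared secret.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) using HMAC-SHA1."""
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation offset
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(secret: bytes, at: Optional[float] = None, step: int = 30) -> str:
    """Time-based one-time password: HOTP over the current time step."""
    now = time.time() if at is None else at
    return hotp(secret, int(now // step))


def verify_totp(secret: bytes, submitted: str, at: Optional[float] = None,
                step: int = 30, window: int = 1) -> bool:
    """Accept codes from the current step and `window` steps either side."""
    now = time.time() if at is None else at
    counter = int(now // step)
    for delta in range(-window, window + 1):
        if counter + delta < 0:
            continue  # no time steps before the epoch
        expected = hotp(secret, counter + delta)
        # Constant-time comparison to avoid leaking how many digits matched.
        if hmac.compare_digest(expected.encode(), submitted.encode()):
            return True
    return False


if __name__ == "__main__":
    shared_secret = b"12345678901234567890"  # test secret from the RFCs
    print(totp(shared_secret, at=59))        # 287082
```

Because the code changes every 30 seconds and depends on a secret the attacker does not hold, a convincing face or voice alone is not enough to pass this check.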

Deepfakes are a double-edged sword because they combine innovation with serious dangers. The situation in Hong Kong emphasizes the necessity of increased oversight, stricter laws, and preventative actions. The incident exposed the flaws in the current legal framework and underlined how crucial it is to adopt comprehensive legislation that properly addresses the misuse of deepfakes. Our safeguards against abuse must advance along with the technology.

Cybersecurity is being threatened by deepfake technology in today’s digital environment. It’s critical to keep up with the most recent advancements in deepfake technology and the risks involved in order to safeguard both our organizations and ourselves. Gaining knowledge about how deepfakes are produced and distributed can improve our capacity to identify and address possible risks.

It’s also crucial to put strong verification procedures in place. Creating a multi-step authentication procedure that involves internal approval processes and verbal verification is part of this, particularly for transactions or conversations containing sensitive data. We may improve our defenses against deepfake-related risks and help create a safer online environment by using these measures.
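As a rough illustration of such a multi-step procedure, the sketch below blocks a transfer until the requester's identity has been confirmed on a separate channel and, for large amounts, until enough independent approvers have signed off. The class, field names, threshold, and approver count are all hypothetical and would depend on an organization's own policy.

```python
from dataclasses import dataclass, field
from typing import Set, Tuple


@dataclass
class TransferRequest:
    requester: str
    amount: float
    requested_via: str               # e.g. "video_call" or "email"
    callback_verified: bool = False  # confirmed via a known phone number
    approvers: Set[str] = field(default_factory=set)


def may_execute(request: TransferRequest,
                high_value_threshold: float = 10_000.0,
                required_approvers: int = 2) -> Tuple[bool, str]:
    """Return (allowed, reason) under a hypothetical approval policy."""
    # Step 1: verbal verification on a channel the requester did not choose.
    if not request.callback_verified:
        return False, "identity not confirmed on a separate channel"
    # Step 2: internal approval; the requester cannot approve their own request.
    independent = request.approvers - {request.requester}
    if request.amount >= high_value_threshold and len(independent) < required_approvers:
        return False, "not enough independent approvers"
    return True, "ok"


if __name__ == "__main__":
    req = TransferRequest("cfo", 25_000_000, "video_call")
    print(may_execute(req))  # (False, 'identity not confirmed on a separate channel')
```

Under a policy like this, a request that arrives only through a video call is stopped at the first check, however convincing the call looks.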

Thank you!

It’s a scary fact that as technology advances, so do the threats we face, with deepfakes being one of the most concerning today. These AI-generated videos, audios, or images can make it seem like someone said or did something they never actually did, leading to serious consequences like spreading misinformation or damaging reputations. With these increasingly realistic manipulations, it’s getting harder to trust what we see and hear, which is truly alarming. While tools to detect deepfakes can help, the real responsibility lies with us. We must stay aware, verify sources, look for signs of fakeness, and use detection tools when necessary. The more informed we are and the more we share this knowledge, the better we can navigate the digital world and protect ourselves from falling victim to these deceptions. So, thanks for spreading the word!

This is very serious! These deepfake tools are good enough to fool people regardless of how experienced they are with IT. An interesting yet heartbreaking example is the French woman who fell for what people called “AI Brad Pitt”. A deepfake AI tool was used to create pictures and videos of the popular actor Brad Pitt supposedly hospitalized and in need of the victim’s financial assistance. The victim realised she had been defrauded when the real Brad Pitt made headlines with his girlfriend. She reportedly lost a whopping 830,000 euros to this scam. For further detail, you can access the links below.

https://www.bbc.com/news/articles/ckgnz8rw1xgo

https://www.france24.com/en/live-news/20250114-french-woman-faces-cyberbullying-after-falling-for-fake-brad-pitt

In conclusion, I can say this issue is given less attention than it deserves. As the use of AI grows and gets more complicated, so will its threats. I believe we as students should take an interest in this subject and help check the abuse of useful technologies like this.

I found your article really compelling, Saniya. The explanation of deepfake technology and the real-life example of the Hong Kong incident were really something. It’s amazing and alarming how deepfakes can be used for both good and bad purposes. As you mentioned, deepfakes can be exploited for disinformation campaigns and social engineering attacks. This underscores the necessity for robust verification methods and stringent regulatory measures to mitigate the misuse of such advanced technologies. Thanks for sharing this important information!

Great post, Saniya. As AI technology becomes better and better, it’s going to be nearly impossible to tell deepfakes from reality. Your point about better detection technology is pertinent, and it seems increasingly likely that the solution to AI weaponized for purposes like Social Engineering is going to be fighting fire with fire, and training AI to detect such fraudulent activity. Unfortunately, AI was rather haphazardly released by companies like OpenAI, without waiting for proper governmental oversight and legislation to try and—if not curb its use for nefarious purposes—at least mandate the development of defense tools to detect it.

Interesting and thought-provoking post, Saniya! With the speed of technological advancement and the risks that come with it, we need to find a balance between efficiency and security! AI has its benefits and downsides! Security awareness should be introduced as early as elementary school to help keep younger generations from falling prey to AI inventions such as deepfakes and to keep our society safer.

Great work Saniya!! The Hong Kong incident serves as a potent illustration of how deepfakes take advantage of trust, highlighting the necessity of stronger legal frameworks, employee training, and improved detection systems. Your focus on preventative strategies like multi-factor authentication and awareness campaigns provides useful and doable advice for reducing these risks in the current digital environment.

This is such an interesting and relevant article Saniya! I recently watched a TV series called The Capture, which does a great job of showing how deepfake technology and live video manipulation can be used to deceive people in real time. It was really interesting to see how easily technology can be used to fabricate convincing fake videos that can influence decisions, something very similar to the Hong Kong incident mentioned in the blog. In my opinion, it’s really important to be mindful of what we share online. I believe we should keep our social media circles limited to people we personally know and trust, so that our pictures and personal information don’t fall into the wrong hands. With deepfake technology advancing so rapidly, staying informed and taking precautions to protect our digital identity is more important than ever.

Concerns about the negative consequences of a deepfake attack are legitimate. Thank you, Saniya, for sharing. Criminals are manipulating video and audio to deceive companies and steal data, money, or both. The Hong Kong event underlines the growing threats posed by deepfake technology, particularly in corporate environments where trust is essential. The fact that scammers were able to effectively imitate high-ranking authorities in a video conversation and trick a finance employee into transferring such a huge sum of money is very concerning. Deepfake technology’s sophistication is likely to evolve further, so businesses will need to keep up with advances in both security and AI technology to stay ahead of new sorts of fraud.

Really insightful post on deepfakes, Saniya! It is amazing how far the technology has come, with a lot of possibilities, risks, and threats. The misuse in the Hong Kong incident you mentioned again comes down to MFA and remote-office practices. It is definitely a technology that needs to be handled with caution, control, and safeguards. Looking forward to seeing how the industry and lawmakers address these challenges.

Nice post, Saniya! Nowadays deepfakes are a serious threat, enabling misinformation to spread and facilitating fraud, as in the Hong Kong case you mentioned. These technologies damage trust in the media, twist public opinion, and can cause huge financial and reputational harm. Addressing this requires a multidimensional approach that includes developing AI-powered detection tools, increasing public awareness, strengthening the legal framework, developing AI responsibly, and empowering individuals to critically evaluate information.

Great insights! Deepfakes are truly one of the most alarming technological advancements today. These AI-generated manipulations can convincingly mimic real people, leading to misinformation, reputational damage, and even financial fraud, as seen in the $25 million Hong Kong incident. This highlights the pressing need for stronger detection technologies, robust verification processes, and proactive regulations to curb misuse. As mentioned, education and awareness are key. Security training should start early to equip future generations against these threats. On an individual level, verifying sources, using multi-factor authentication, and staying informed can make a huge difference. AI’s role in both creating and combating deepfakes underscores its dual nature. While advancements are exciting, they must be paired with ethical use and proper oversight. Thank you for shedding light on this critical topic. Awareness is the first step toward a safer digital future!

Well done on highlighting the growing threat of deepfakes, weaving together technical explanations, real-world examples, and practical countermeasures.

GANs, used to create deepfakes, enable individuals with minimal technical expertise to create convincing fakes. This democratization of technology significantly amplifies the threat, making it easier for malicious actors to exploit this capability for harm. It’s no longer just the tech-savvy adversary: it could be a former friend, a classmate from your grade 10 class who thinks it’s funny, or an ex wanting to inflict pain and embarrassment. This kind of accessibility makes deepfakes quite worrisome.

I think that tech companies, governments, and academic institutions must work together to develop and deploy detection algorithms while fostering widespread awareness campaigns that extend beyond organizational silos. Some of the comments are mentioning legal and regulatory responses, and while I agree – I also believe it is difficult as we haven’t developed that far as society. This was the danger of releasing AI so quickly and without predetermined regulation and control. Perhaps we could look into GDPR or HIPAA, evolve those frameworks to address deepfake-related crimes.

Overall, this is an urgent issue that needs attention across the board from everyone – from individuals to organizations and policymakers. Combating the threats posed by deepfakes requires a collective effort that combines technological innovation, robust regulatory frameworks, and widespread education.

Thank you for such a thoughtful comment! You’re absolutely right—the accessibility of deepfake tech makes it a much bigger threat, and tackling it will take collaboration across tech companies, governments, and academic institutions. Detection tools, awareness campaigns, and evolving frameworks like GDPR are all great steps forward. I also agree that releasing AI without proper safeguards has left us playing catch-up. It’s definitely an issue that needs urgent, collective action—thank you for highlighting that so well!

Awesome post, I really like how you broke down the positive and negative uses of deepfakes and highlighted that they’re not just used for malicious purposes. Deepfakes seem to be getting more and more common. I feel that when the technology first came out, deepfakes weren’t that great and took a lot of time and resources to create, but now, with the AI boom and faster computers, anyone with enough effort can make them. This also ties in with the social media lecture we had for this class, as the more you post about yourself, the more you expose yourself to malicious actors making a deepfake of you for whatever purpose.

You’re so right: deepfakes have really come a long way, and with the advancements in AI they’ve become much more accessible and convincing too. It feels like anyone with enough determination can create one now. It’s crazy how something that used to take so much effort is now so easy! And yeah, the connection to the social media lecture is spot on. It’s such a good reminder to think twice about how much we share online. Glad you liked the breakdown!

Great post, Saniya. Every time I hear about deepfakes, I just get more and more scared. It feels like the technology is at a point of no return. It’s gotten to the point where people can be easily fooled, but people aren’t getting better at identifying deepfakes. Given the rate at which deepfakes are improving, I wonder if identifying them is even possible. I feel like a safe assumption is that everything is fake, no matter how bleak that sounds. I think we need to work on systems that we can use to identify each other in that landscape, like biometrics. In the case of the Hong Kong incident, I feel like it could have been avoided if the scammer had needed to bypass some form of biometric check. This feels like the only solution to me, but I am not sure how feasible it is or how fast we can make it a reality.